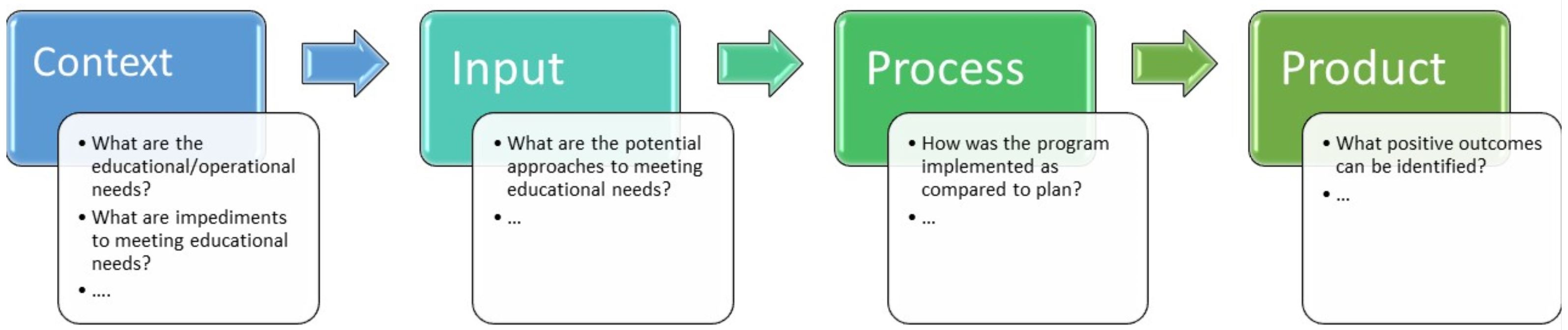

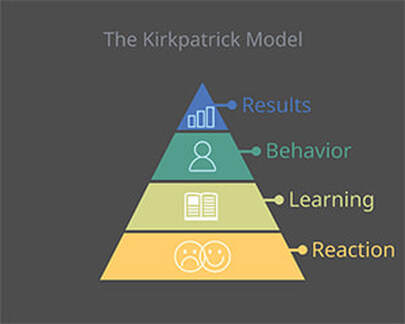

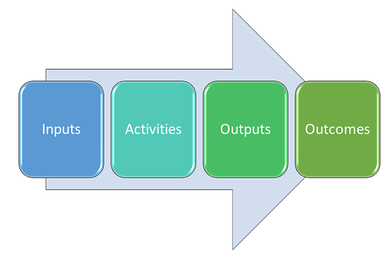

The Mindset for Program Evaluation

Kevin, my better half, is an expert gardener. He enjoys figuring out the most intricate watering systems, and we buy lots of tools and items I have never seen or heard of before. I love to share his joy about a thriving plant or a bountiful harvest. However, successful gardening involves serious planning and research. Anyone who gardens will tell you that there are seasons and cycles, and that the growing conditions must suit the plant for it to flourish. The timing of pruning, trimming, or fertilizing is crucial and often cannot be determined until the next season, after the harvest. At that point, experiences turn into data.

Educational programs are living systems, too. As Cameron D. Norman writes on his blog, living systems, particularly human systems, are often complex in nature (Norman, 2017). This complexity may include simple relationships as well as more complicated ones (Mennin, 2010; Frye & Hemmer, 2012). Nevertheless, program evaluation is an obligation for anyone directing a medical education program, whatever its timeframe (Harden & Laidlaw, 2020). Any change to the program relates to the goals and strategies of the system in which the program is situated, and different stakeholders of the institution are affected in different ways, even if only on a small scale. Just consider that your primary goals for your educational program may differ from those of the senior dean, who may need you, for example, to increase enrollment. Thus, it is important to align program and institutional goals with outcome measures for optimal program evaluation. As an example, at my home institution, the Zucker School of Medicine at Hofstra/Northwell, program objectives are listed in the Medical Student-as-Teacher elective syllabus together with the corresponding curricular drivers or guiding principles of our curricular design. This way, meeting the program objectives also partly satisfies the institutional goals.
Program evaluations help us make decisions by generating data to improve the program in a purposeful rather than arbitrary manner. As in a garden, circumstances change, and it helps to know how effective each program component is so that it can be adjusted to changing conditions, such as the unexpected impact of COVID-19 on budgets, public relations, or emerging competition. Data also allow us to investigate the future of the program. Applying foresight, we can construct possible options and scenarios based on a set of program characteristics (e.g., enrollment, learning management system) that we may be able to manipulate and improve.

In my October 2020 post, “Easy Guide to Program Development in Six Steps,” I wrote that learner assessment and program evaluation are related to each other. However, program evaluation seeks to judge the overall effectiveness of the program, analyzing important components such as student satisfaction, innovative deliverables (e.g., novel learning modalities such as facilitated WhatsApp groups), or faculty evaluation. Evaluation methods must be carefully aligned with these deliverables and their intended effects, and the deliverables themselves should be built into the program objectives from the start. Wiggins and McTighe (1998) recognize that educators are usually guided by national or institutional standards that govern program evaluations. Thus, it is most effective to keep the desired results of the program in mind during the planning stage by connecting objectives with the methods of their evaluation (Wiggins & McTighe, 1998; Goldie, 2006; Durning, Hemmer & Pangaro, 2007). Depending on the timing, stipulations, or resources available, these methods may include observation, surveys, questionnaires, interviews, or focus group sessions.
Theories inform evaluation models such as the Logic Model, the CIPP (Context/Input/Process/Product) model, or Kirkpatrick’s four-level model, any of which you may choose depending on your evaluation process and its aim (Frye & Hemmer, 2012). For example, a brief survey after each program session provides quick feedback from your learners and takes little time and effort. Exit interviews require more resources but deliver granular data for specific program revisions and reports. Here are some ways to start your thinking:

1) Conduct a systematic literature search. Reviewing published programs of similar topic, scope, and design early in the planning phase can inspire evaluations of your program objectives and identify best practices. Ideas for evaluating the impact of innovative key components (e.g., novel interactive teaching tools) may also emerge from the search. It may help to formulate a hypothetical research question (e.g., “What is the usefulness of WhatsApp groups in a clinical course?”) to generate more search terms and surface published examples. The search will also give you an idea of the timeframe and resources required, and of the negotiations with stakeholders that may be needed.

2) Identify the evaluator, the timeframe, and the use of the outcome data. Keep in mind that there are formative and summative evaluations. The purpose of formative evaluations is to identify strengths and weaknesses for program improvement; they provide information mostly to program directors inside the institution, without a final judgment. Summative evaluations, on the other hand, provide a final judgment and may be conducted externally. First and foremost, you and your team need to know how the program is performing, which components and processes are most effective, and where the opportunities for improvement lie. This implies two types of evaluation: process evaluations and outcome evaluations.
Process evaluations occur early in a new program or midway through, allowing for course corrections. Outcome evaluations examine the end results to determine the program's impacts and effects. Best practices, challenges, and the ways you overcame barriers are valuable and should be shared with your educational community through publications. Second, your chair, dean, or president may also need to know how the program is going. Even without an explicit request, it is prudent to have data on hand so that you can report briefly when asked. Third, an external evaluation may take place. Any outside entity will likely request a self-study based on its own set of metrics and data points. Finally, keep in mind that oral reports from your learners, such as final presentations, generate images that are also data and look great in reports or public relations communications.

3) Approaches, models, and theories… oh my! The design of your evaluation depends on the educational environment of the institution in which the program is situated; its content, objectives, educational strategies, and stakeholders; learner assessment; resources; and many more considerations unique to your program (Harden & Laidlaw, 2020). You may also weigh a broad evaluation of the whole program against an in-depth examination of one or two deliverables, e.g., of a novel program component (Blanchard, Torbeck & Blondeau, 2013). For example, the evaluation of a prevocational rural health training program in remote Australia in 2006 took into account that only two practitioners per year were involved in the program and therefore chose a qualitative analysis framework that examined reflective journals (Mak, Plant, & Toussaint, 2006). The bottom line: whether you choose an individual model or a combination of models, the evaluation must be adequate for the program (Frye & Hemmer, 2012). Program evaluation models are theory-based and ideally aligned with the program’s complexity.
Self-study evaluations (e.g., to fulfill accreditation requests) are usually atheoretical (Blanchard, Torbeck & Blondeau, 2013). Theories that inform program evaluation models include (1) reductionism, with its cause-effect linearity of “if-then” thinking; (2) general system theory, with the understanding that program components interact with each other and are subject to change; and (3) complexity theory, with its appreciation of uncertainty and of the diversity of interactions within and between systems.

Kirkpatrick’s four-level evaluation model and the New World Kirkpatrick Model have been aligned with several workplace-based theories over time. Both use four criteria, or levels, to evaluate training programs: Level 1 (Reaction); Level 2 (Learning); Level 3 (Behavior/on-the-job performance, transfer); and Level 4 (Results/organizational impact) (Reio, Rocco, Smith, & Chang, 2017). Evaluating Levels 1 and 2 can reveal meaningful and actionable insights; however, a common criticism is that Levels 3 and 4 are difficult to evaluate. In 2016, Kirkpatrick and Kirkpatrick coined the New World Kirkpatrick Model, expanding Level 1 to include engagement and relevance, and Level 2 to include confidence and commitment (Kirkpatrick & Kirkpatrick, 2016; Moreau, 2017).

Logic models are influenced by system theory, emphasize the relationships between program components, and in their simplest form consist of four main constituents: (1) Inputs, (2) Activities, (3) Outputs, and (4) Outcomes. The complexity of each component varies greatly between programs and, hence, between models. A Logic Model’s inputs encompass all the resources necessary for implementation and operation. Activities include the strategies and changes the program implements. Outputs vary in size and indicate the completion of those activities, or parts of them, as well as any products. Finally, outcomes describe the changes that result from the activities and may include, e.g., demonstrated knowledge or skill acquisition.
Logic models must clearly define the links between components within the program’s context, but they also invite us to think with the end in mind (Frye & Hemmer, 2012). The CIPP (Context/Input/Process/Product) model was initially developed for program improvement and has since been used across a variety of settings (Stufflebeam & Shinkfield, 2007). The CIPP approach is consistent with systems theory and, in part, with complexity theory, and its components accommodate the ever-changing nature of programs. Each of the CIPP components requires an in-depth study that can deliver formative or summative evaluations through a variety of data collection methods.

Inspired by Frye & Hemmer, 2012; adjusted.

Overall, a well-planned program evaluation provides you with a game plan for improving your program. An open mind toward challenges and changing contexts is key. Moreover, new evaluation frameworks keep emerging, and I encourage you to conduct mindful literature searches to develop an evaluation that not only works for your program but also drives it toward progress and success.

References:

Aung, K., Buer, T., Frederico-Martinez, G., Kahn, J., Lapiner, C., Shapiro, J., & Skarupski, K. (2020, October 21). Program evaluation and scholarship in faculty affairs and development: Mapping your design and analysis [Webinar]. AAMC GFA Professional Development Conference.

Blanchard, R. D., Torbeck, L., & Blondeau, W. (2013). AM last page: A snapshot of three common program evaluation approaches for medical education. Academic Medicine, 88(1), 146.

Durning, S. J., Hemmer, P., & Pangaro, L. N. (2007). The structure of program evaluation: An approach for evaluating a course, clerkship, or components of a residency or fellowship training program. Teaching and Learning in Medicine, 19(3), 308-318.

Frye, A. W., & Hemmer, P. A. (2012). Program evaluation models and related theories: AMEE guide no. 67. Medical Teacher, 34(5), e288-e299.

Goldie, J. (2006). AMEE Education Guide no. 29: Evaluating educational programmes. Medical Teacher, 28(3), 210-224.

Harden, R. M., & Laidlaw, J. M. (2020). Essential skills for a medical teacher: An introduction to teaching and learning in medicine. Elsevier Health Sciences.

Kirkpatrick, J. D., & Kirkpatrick, W. K. (2016). Kirkpatrick's four levels of training evaluation. Association for Talent Development.

Mak, D. B., Plant, A. J., & Toussaint, S. (2006). “I have learnt… a different way of looking at people's health”: An evaluation of a prevocational medical training program in public health medicine and primary health care in remote Australia. Medical Teacher, 28(6), e149-e155.

Mennin, S. (2010). Complexity and health professions education. Journal of Evaluation in Clinical Practice, 16(4), 835-837.

Moreau, K. A. (2017). Has the new Kirkpatrick generation built a better hammer for our evaluation toolbox? Medical Teacher, 39(9), 999-1001.

Norman, C. D. (2017). A mindset for developmental evaluation. Censemaking: Innovation at the speed of coffee [Blog post]. Retrieved December 28, 2020, from https://censemaking.com/2017/09/07/a-mindset-for-developmental-evaluation/

Norman, C. D. (2020). Causal looks: Foresight fundamentals. Censemaking: Innovation at the speed of coffee [Blog post]. Retrieved December 28, 2020, from https://censemaking.com/2020/06/18/causal-looks-foresight-fundamentals/

Reio, T. G., Rocco, T. S., Smith, D. H., & Chang, E. (2017). A critique of Kirkpatrick's evaluation model. New Horizons in Adult Education and Human Resource Development, 29(2), 35-53.

Stufflebeam, D. L., & Shinkfield, A. J. (2007). CIPP model for evaluation: An improvement accountability approach. Evaluation theory, models, and applications, 325-365.

Wiggins, G., & McTighe, J. (1998). What is backward design? Understanding by Design, 1, 7-19. https://valenciacollege.edu/faculty/development/courses-resources/documents/WhatisBackwardDesignWigginsMctighe.pdf

Zucker School of Medicine (2020). Guiding principles. https://medicine.hofstra.edu/education/md/index.html

Zucker School of Medicine (2020). Curriculum drivers. https://medicine.hofstra.edu/education/md/curriculum-drivers.html
